Table of Contents

- 1. Business Requirements for a Robo-Advisor (RA) Platform

- 2. User Interface Requirements for a Robo-Advisor (RA) Platform

- 3. Algorithms for a Robo-Advisor (RA) Platform

- 4. Model Portfolios

- 5. Sample Portfolios – for an aggressive investor

- 6. Technology Requirements for a Robo-Advisor (RA) Platform

- 7. Conclusion

1. Business Requirements for a Robo-Advisor (RA) Platform

Some of the key business requirements of an RA platform that give it advantages over the manual, human-driven style of investing are:

- Collect Individual Client Data – RA platforms need to offer a high degree of customization for the individual investor. This means providing an interface, preferably both mobile and web, that captures the customer’s detailed financial background and existing investments, as well as any historical data regarding customer segments.

- Client Segmentation – Clients are segmented into granular segments rather than by the traditional asset-based methodology (e.g. mass affluent, high net worth, ultra high net worth).

- Algorithm Based Investment Allocation – Once the client data is collected, normalized & segmented, a variety of algorithms are applied to the data to classify the client’s overall risk profile, and an investment portfolio is allocated based on that profile. Appropriate securities are then purchased, as discussed in the sections below.

- Portfolio Rebalancing – The client’s portfolio is rebalanced appropriately depending on life event changes and market movements.

- Tax Loss Harvesting – Tax-loss harvesting is the mechanism of selling securities that are currently held at a loss. By realizing the loss, the client can offset taxes on both gains and income. The RA platform replaces the sold securities with similar securities, thus maintaining the optimal investment mix.

- A Single View of a Client’s Financial History – From the wealth management (WM) firm’s standpoint, it is very useful to have a single view of an RA client that shows all of their accounts, interactions & preferences in one place.
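The tax-loss harvesting requirement above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a production rule set: the `Position` type, the `REPLACEMENTS` mapping, the ticker names and the 5% loss threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Position:
    ticker: str
    cost_basis: float    # total amount paid for the position
    market_value: float  # current value of the position


# Hypothetical mapping from a security to a similar replacement security.
REPLACEMENTS = {"FUND_A": "FUND_A_ALT", "FUND_B": "FUND_B_ALT"}


def harvest_candidates(positions, min_loss_pct=5.0):
    """Return (sell, buy, realized_loss) tuples for positions held at a loss.

    min_loss_pct is an assumed threshold: smaller losses are left alone.
    """
    orders = []
    for p in positions:
        loss = p.cost_basis - p.market_value
        if loss <= 0 or p.cost_basis <= 0:
            continue  # at a gain (or no usable basis): nothing to harvest
        loss_pct = loss / p.cost_basis * 100
        replacement = REPLACEMENTS.get(p.ticker)
        if loss_pct >= min_loss_pct and replacement is not None:
            # Sell the loser and buy a similar security to keep the mix intact.
            orders.append((p.ticker, replacement, round(loss, 2)))
    return orders


portfolio = [
    Position("FUND_A", cost_basis=10_000, market_value=8_500),  # 15% loss
    Position("FUND_B", cost_basis=5_000, market_value=5_400),   # held at a gain
    Position("FUND_C", cost_basis=2_000, market_value=1_950),   # 2.5% loss
]
print(harvest_candidates(portfolio))
```

Only `FUND_A` qualifies here: `FUND_B` is held at a gain, and the loss on `FUND_C` is below the assumed threshold and has no replacement configured.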

2. User Interface Requirements for a Robo-Advisor (RA) Platform

Once a customer logs in using any of the supported digital channels (e.g. Mobile, eBanking, Phone), they are presented with a single view of all their accounts. This view has a few critical areas, including the Summary View (showing an aggregated view of their financial picture) and the Transfer View (allowing them to transfer funds across accounts with other providers).

The Summary View lists the following:

- Demographic info: Customer name, address, age

- Relationships: customer rating influence, connections, associations across client groups

- Current activity: financial products, account interactions, any pressing customer issues, missed payments, etc.

- Customer Journey Graph: the products or services they have been associated with since they first became a customer

Depending on the client’s risk tolerance and investment horizon, the weighted allocation of investments across asset categories will vary. To illustrate this, a Model Portfolio and a sample portfolio are shown in sections 4 and 5.

3. Algorithms for a Robo-Advisor (RA) Platform

There are a variety of algorithmic approaches that could be taken to building out an RA platform. However, all of these share a few common features:

- They leverage data science & statistical modeling to automatically allocate client wealth across different asset classes (such as domestic/foreign stocks, bonds & real estate related securities) and to automatically rebalance portfolio positions based on changing market conditions or client preferences. These investment decisions are also based on a detailed behavioral understanding of the client’s financial journey metrics: Age, Risk Appetite & other related information.

- A mixture of different models can be used, such as Modern Portfolio Theory (MPT), the Capital Asset Pricing Model (CAPM), the Black-Litterman model and the Fama-French model. These are used to allocate assets as well as to adjust positions based on market movements and conditions.

- RA platforms also provide 24×7 tracking of market movements and use it to drive rebalancing decisions, not just from a portfolio standpoint but also from a taxation standpoint.
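To make two of the models named above concrete, here is a minimal sketch of the CAPM expected-return formula and of an MPT-style minimum-variance split between two assets. The function names and all input figures are illustrative assumptions; a real platform would estimate these inputs from market data and optimize over many assets.

```python
import math


def capm_expected_return(risk_free, beta, market_return):
    """CAPM: E[R] = Rf + beta * (E[Rm] - Rf)."""
    return risk_free + beta * (market_return - risk_free)


def two_asset_min_variance_weights(vol_a, vol_b, corr):
    """Weights (w_a, w_b) that minimize the variance of a two-asset portfolio."""
    cov = corr * vol_a * vol_b
    denom = vol_a ** 2 + vol_b ** 2 - 2 * cov
    if denom == 0:
        raise ValueError("assets are perfectly correlated with equal volatility")
    w_a = (vol_b ** 2 - cov) / denom
    return w_a, 1 - w_a


def portfolio_volatility(w_a, vol_a, vol_b, corr):
    """Volatility of a two-asset portfolio with weights w_a and 1 - w_a."""
    w_b = 1 - w_a
    variance = (w_a ** 2 * vol_a ** 2 + w_b ** 2 * vol_b ** 2
                + 2 * w_a * w_b * corr * vol_a * vol_b)
    return math.sqrt(variance)


# Illustrative inputs only: a 20%-volatility stock fund and a
# 10%-volatility bond fund, assumed to be uncorrelated.
w_stock, w_bond = two_asset_min_variance_weights(0.20, 0.10, corr=0.0)
print(w_stock, w_bond)
print(portfolio_volatility(w_stock, 0.20, 0.10, corr=0.0))
print(capm_expected_return(risk_free=0.02, beta=1.2, market_return=0.08))
```

With these inputs the minimum-variance mix is roughly 20% stocks and 80% bonds, and its volatility is lower than that of either asset held alone, which is the diversification effect MPT relies on.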

4. Model Portfolios

Equity

- VN Domestic Stock – Large Cap, Medium Cap, Small Cap, Dividend Stocks

- Foreign Stock – Emerging Markets, Developed Markets

Fixed Income

- Developed Market Bonds

- VN Bonds

- International Bonds

- Emerging Markets Bonds

Other

- Real Estate

- Currencies

- Gold and Precious Metals

- Commodities

Cash

5. Sample Portfolios – for an aggressive investor

Equity – 85%

- VN Domestic Stock (50%) – Large Cap – 30%, Medium Cap – 10%, Small Cap – 10%, Dividend Stocks – 0%

- Foreign Stock (35%) – Emerging Markets – 18%, Developed Markets – 17%

Fixed Income – 5%

- Developed Market Bonds – 2%

- VN Bonds – 1%

- International Bonds – 1%

- Emerging Markets Bonds – 1%

Other – 5%

- Real Estate – 3%

- Currencies – 0%

- Gold and Precious Metals – 0%

- Commodities – 2%

Cash – 5%
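The sample allocation above can be expressed as data and checked mechanically. The sketch below copies the percentages from the list, verifies that they add up to 100%, and computes the trades needed to bring a drifted portfolio back to target. The `rebalancing_trades` function, the 1% drift threshold and the example holdings are assumptions made for illustration.

```python
# Target weights (in percent) copied from the sample aggressive portfolio.
AGGRESSIVE_TARGET = {
    "VN Large Cap": 30,
    "VN Medium Cap": 10,
    "VN Small Cap": 10,
    "VN Dividend Stocks": 0,
    "Foreign Emerging Markets": 18,
    "Foreign Developed Markets": 17,
    "Developed Market Bonds": 2,
    "VN Bonds": 1,
    "International Bonds": 1,
    "Emerging Markets Bonds": 1,
    "Real Estate": 3,
    "Currencies": 0,
    "Gold and Precious Metals": 0,
    "Commodities": 2,
    "Cash": 5,
}
assert sum(AGGRESSIVE_TARGET.values()) == 100  # weights must be fully allocated


def rebalancing_trades(holdings, target_pct, drift_threshold_pct=1.0):
    """Return {asset: amount} where positive means buy and negative means sell.

    Only assets whose weight has drifted from target by at least
    drift_threshold_pct percentage points (an assumed tolerance) are traded.
    """
    total = sum(holdings.values())
    if total <= 0:
        return {}
    trades = {}
    for asset in sorted(set(holdings) | set(target_pct)):
        current = holdings.get(asset, 0.0)
        target_value = total * target_pct.get(asset, 0) / 100
        drift_pct = abs(current - target_value) / total * 100
        if drift_pct >= drift_threshold_pct:
            trades[asset] = round(target_value - current, 2)
    return trades


# Hypothetical 100,000 portfolio that starts on target, then drifts:
# large caps have grown by 5,000 while the cash balance has been spent.
holdings = {asset: 1_000.0 * pct for asset, pct in AGGRESSIVE_TARGET.items()}
holdings["VN Large Cap"] += 5_000.0
holdings["Cash"] -= 5_000.0
print(rebalancing_trades(holdings, AGGRESSIVE_TARGET))
```

Because the trades are computed against the current total value, buys and sells net to zero and no new money is required.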

6. Technology Requirements for a Robo-Advisor (RA) Platform

An intelligent RA platform has a few core technology requirements (based on the above business requirements).

- A Single Data Repository – A shared data repository called a Data Lake is created that can capture every bit of client data (explained in more detail below) as well as external data. The RA data lake gives a variety of stakeholders more visibility into all data. Wealth Advisors access processed data to view client accounts. Clients can access their own detailed positions and account balances. The Risk group accesses this shared data lake to process position, execution and balance data. Data Scientists (or Quants) who develop models for the RA platform also access this data to perform analysis on fresh data (from the current workday) or on historical data. All historical data is available for at least five years, much longer than before. Moreover, the Hadoop platform enables ingestion of data from a range of systems despite their having disparate data definitions and infrastructures. All the data that pertains to trade decisions and the trade lifecycle needs to reside in a general enterprise storage pool that runs on HDFS (the Hadoop Distributed File System) or a similar cloud-based filesystem. This repository is augmented by incremental feeds of intra-day trading activity data, which are loaded or streamed in using technologies like Sqoop, Kafka and Storm.

- Customer Data Collection – Existing financial data across the categories below is collected & aggregated into the data lake. This data ranges from Customer Data, Reference Data and Market Data to other Client communications. All of this data can be ingested using an API or pulled into the lake from a relational system using connectors supplied in the RA Data Platform. Examples of data collected include the customer’s existing brokerage accounts, the customer’s savings accounts, and behavioral finance surveys and questionnaires. The RA Data Lake stores all internal & external data.

- Algorithms – The core of the RA Platform is its data science algorithms. Whatever algorithms are used, a few critical workflows are common to them. The first is Asset Allocation, which takes the customer’s input in the “ADVICE” tab for each type of account and tailors the portfolio based on that input. The others include Portfolio Rebalancing and Tax Loss Harvesting.

- The RA platform should be able to store market data across years, both from a macro and from an individual portfolio standpoint, so that several key risk measures such as volatility (e.g. position risk, any residual risk and market risk), Beta and R-Squared can be calculated at multiple levels: for individual securities, for a specified index, and for the client portfolio as a whole.
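The risk measures named in the last requirement can be computed from stored return series along the following lines. This is a minimal sketch using only the standard library; the function names, the sample returns and the assumption of 252 trading periods per year for annualization are illustrative.

```python
import math


def mean(xs):
    return sum(xs) / len(xs)


def covariance(xs, ys):
    """Sample covariance of two equally long return series."""
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (len(xs) - 1)


def volatility(returns, periods_per_year=252):
    """Annualized standard deviation (assumes daily returns by default)."""
    return math.sqrt(covariance(returns, returns) * periods_per_year)


def beta(asset, benchmark):
    """Sensitivity of the asset's returns to the benchmark's returns."""
    return covariance(asset, benchmark) / covariance(benchmark, benchmark)


def r_squared(asset, benchmark):
    """Share of the asset's variance explained by the benchmark."""
    corr = covariance(asset, benchmark) / math.sqrt(
        covariance(asset, asset) * covariance(benchmark, benchmark)
    )
    return corr ** 2


# Made-up daily returns for an index and a portfolio that moves
# exactly 1.5x as much as the index.
index_returns = [0.010, -0.020, 0.015, 0.005, -0.010]
portfolio_returns = [1.5 * r for r in index_returns]

print(beta(portfolio_returns, index_returns))
print(r_squared(portfolio_returns, index_returns))
print(volatility(portfolio_returns))
```

The same functions apply at each level mentioned above: pass the return series of a single security, of an index, or of the whole client portfolio.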

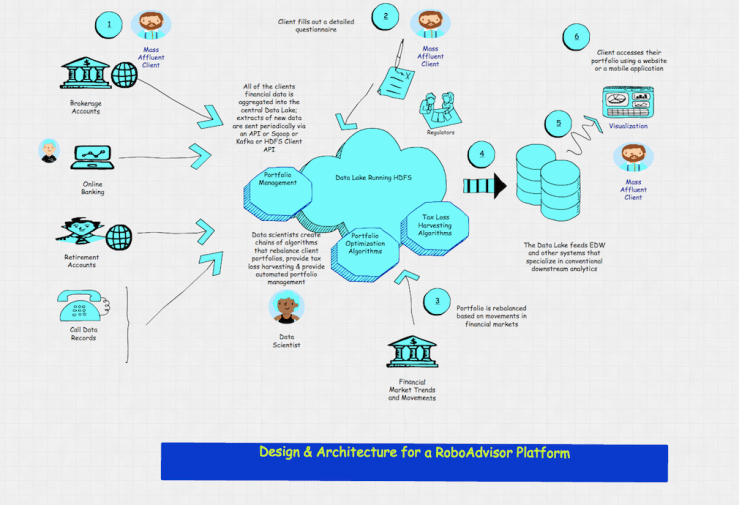

The overall logical flow of data in the system:

- Information sources are depicted at the left. These encompass a variety of institutional, system and human actors, potentially sending thousands of real-time messages per hour or sending over batch feeds.

- A highly scalable messaging system helps bring these feeds into the RA Platform architecture, normalize them and send them on for further processing. Apache Kafka is a good choice for this tier. Real-time data is published by a range of systems over Kafka topics. Each transaction could potentially include hundreds of attributes that can be analyzed in real time to detect business patterns. We leverage Kafka’s integration with Apache Storm to read one message at a time and persist the data into an HBase cluster. In a modern data architecture built on Apache Hadoop, Kafka (a fast, scalable and durable message broker) works in combination with Storm, HBase (and Spark) for real-time analysis and rendering of streaming data.

- Trade data is thus streamed into the platform (on a T+1 basis), which ingests, collects, transforms and analyzes core information in real time. The analysis can involve both simple and complex event processing and is based on pre-existing rules defined in a rules engine, which is invoked from Apache Storm. A Complex Event Processing (CEP) tier can process these feeds at scale to understand the relationships among them; these relationships are defined by business owners in a non-technical language or by developers in a technical language. Apache Storm integrates with Kafka to process incoming data.

- For real-time or batch analytics, Apache HBase provides near real-time, random read and write access to tables (or ‘maps’) storing billions of rows and millions of columns. Once this rapidly and continuously growing dataset from the information producers is stored, we can perform very fast lookups for analytics irrespective of the data size.

- Data that has analytic relevance and needs to be kept for offline or batch processing can be stored using the Hadoop Distributed Filesystem (HDFS) or an equivalent filesystem such as Amazon S3, EMC Isilon or Red Hat Gluster. The idea is to deploy Hadoop-oriented workloads (MapReduce or Machine Learning) directly on the data layer. This is done to perform analytics on small, medium or massive data volumes over a period of time. Historical data can be fed into the Machine Learning models created above and commingled with streaming data as discussed in step 1.

- Horizontal scale-out (read: cloud-based IaaS) is preferred as a deployment approach, as this helps the architecture scale linearly as the loads placed on the system increase over time. This approach enables the platform’s processing engine to distribute the load dynamically across a cluster of cloud-based servers based on trade data volumes.

- It is recommended to take an incremental approach to building the RA platform. Once all data resides in a general enterprise storage pool, it becomes accessible to many analytical workloads including Trade Surveillance, Risk, Compliance, etc. A shared data repository across multiple lines of business provides more visibility into all intra-day trading activities. Data can also be fed into downstream systems in a seamless manner using technologies like Sqoop, Kafka and Storm. The results of the processing and queries can be exported in various data formats: a simple CSV/txt format, more optimized binary formats, JSON formats, or custom formats by plugging in a custom SerDe. Additionally, with Hive or HBase, data within HDFS can be queried via standard SQL using JDBC or ODBC. The results will be in the form of standard relational DB data types (e.g. String, Date, Numeric, Boolean). Finally, REST APIs in HDP natively support both JSON and XML output by default.

- Operational data across a range of asset classes, risk types and geographies is thus available to investment analysts during the entire trading window while markets are still open, enabling them to reduce the risk of that day’s trading activities. The specific advantages of this approach are two-fold. First, existing architectures are typically only able to hold a limited set of asset classes within a given system, which means that the data is only assembled for risk processing at the end of the day. Second, historical data is often not available in sufficient detail. Hadoop accelerates a firm’s speed-to-analytics and also extends its data retention timeline.

- Apache Atlas is used to provide Data Governance capabilities in the platform that use both prescriptive and forensic models, which are enriched by a given business’s data taxonomy and metadata. This allows for tagging of trade data between the different business data views, which is a key requirement for good data governance and reporting. Atlas also provides audit trail management as data is processed in a pipeline in the lake.

- Another important capability that Big Data/Hadoop can provide is the establishment and adoption of a Lightweight Entity ID service, which aids dramatically in the holistic viewing & audit tracking of trades. The service will consist of entity assignment for both institutional and individual traders. The goal here is to get each target institution to propagate the Entity ID back into its trade booking and execution systems; transaction data will then flow into the lake with this ID attached, providing a way to do Client 360.

- Output data elements can be written out to HDFS and managed by HBase. From here, reports and visualizations can easily be constructed. One can optionally layer in search and/or workflow engines to present the right data to the right business user at the right time.
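The ingest, normalize, apply-rules and persist flow described in the steps above can be illustrated with a small in-memory stand-in. This sketch does not use the Kafka, Storm or HBase client APIs: a `queue.Queue` plays the role of a Kafka topic, plain functions play the role of the Storm processing steps, and a dictionary plays the role of an HBase table. The field aliases, the `large_order` rule and the row-key format are hypothetical.

```python
import queue

# Different source systems name the same field differently (assumed examples).
FIELD_ALIASES = {
    "acct": "account_id", "account": "account_id",
    "sym": "symbol", "ticker": "symbol",
    "qty": "quantity", "shares": "quantity",
}

# Hypothetical business rules as (name, predicate) pairs, standing in for
# the rules that business owners would define in a rules engine.
RULES = [("large_order", lambda event: event.get("quantity", 0) >= 10_000)]


def normalize(message):
    """Map source-specific field names onto one common schema."""
    return {FIELD_ALIASES.get(key, key): value for key, value in message.items()}


def apply_rules(event):
    """Return the names of all rules that the event triggers."""
    return [name for name, predicate in RULES if predicate(event)]


def run_pipeline(feed, store):
    """Drain the feed, enrich each event and persist it under a row key."""
    while not feed.empty():
        event = normalize(feed.get())
        event["flags"] = apply_rules(event)
        row_key = f'{event["account_id"]}#{event["trade_id"]}'
        store[row_key] = event
    return store


feed = queue.Queue()  # stand-in for a Kafka topic
feed.put({"trade_id": "T1", "acct": "A-100", "sym": "XYZ", "qty": 500})
feed.put({"trade_id": "T2", "account": "A-200", "ticker": "XYZ", "shares": 25_000})

store = run_pipeline(feed, {})  # the dict is a stand-in for an HBase table
for row_key, event in store.items():
    print(row_key, event)
```

Keying each row by account and trade ID keeps lookups for a given client cheap, which mirrors the random-access role the text assigns to HBase.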

7. Conclusion

As one can clearly see, though automated investing methods are still in the early stages of maturity, they hold out a tremendous amount of promise. As they are unmistakably the next big trend in the WM industry, industry players should begin developing such capabilities.